Premio AI Edge Inference Computers are high-performance computing solutions designed to process complex AI and deep learning workloads at the edge of the network. These computers use “EDGEBoost Node” technology for advanced acceleration, enabling real-time data analysis, pattern recognition, and decision-making without relying on cloud-based resources.

AI Edge Inference Computers bring AI processing closer to the data source, reducing latency and enhancing real-time decision-making. This is crucial for applications where quick insights and responses are vital, such as industrial automation, surveillance, healthcare, and autonomous systems.

Premio's AI Edge Inference Computers cater to a wide range of industries including manufacturing, healthcare, transportation, retail, agriculture, energy, and more. Any industry that requires real-time rugged edge AI processing for enhanced efficiency, safety, and productivity can benefit from these computers.

Yes, our AI Edge Inference Computers offer customization options for performance acceleration to meet specific application requirements through our modular EDGEBoost Nodes. From hardware configurations to BIOS settings and OS installations, we work closely with clients to tailor solutions that fit their unique needs.

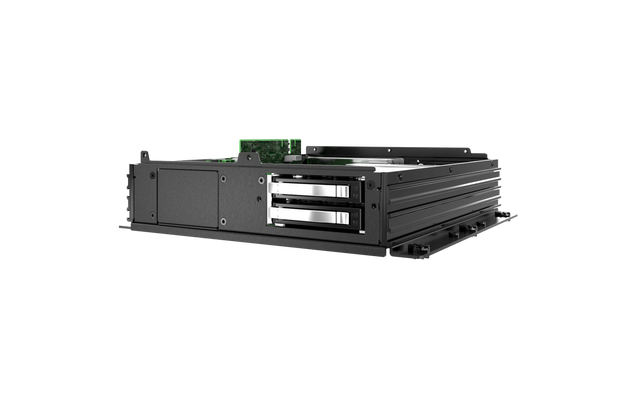

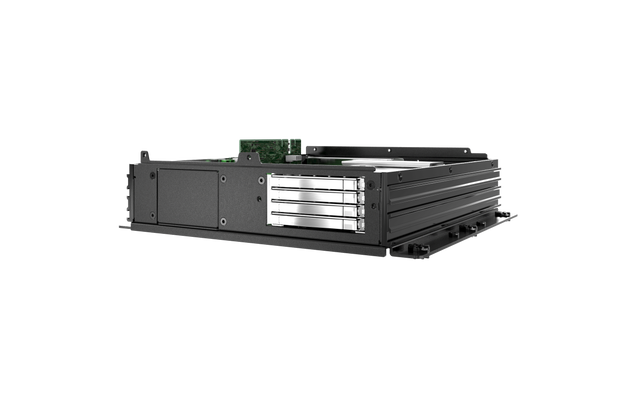

EDGEBoost Nodes are modular hardware performance accelerators for Premio’s RCO-6000 Series Industrial Computer. When paired with the computer, EDGEBoost Nodes add GPU and/or NVMe support, plus PCIe/PCI expansion options, for even greater performance at the rugged edge. Learn about EDGEBoost Nodes.

EDGEBoost I/O modules are proprietary daughterboards and carrier boards, each with a particular set of I/O, that can be integrated into Premio industrial computers that feature an EDGEBoost bracket. The plug-and-play modules provide a wide variety of additional I/O, including serial and digital inputs. Learn more about EDGEBoost I/O modules.

Premio places a strong emphasis on data security. Our computers come with built-in security features such as TPM 2.0 hardware-based encryption, secure boot, and remote management capabilities. These measures help protect sensitive data and ensure compliance with industry regulations.

Absolutely. Many of our AI Edge Inference Computers are designed to withstand harsh conditions, including extreme temperatures, shock, vibration, and fluctuations in power. These ruggedized solutions are ideal for applications in industrial automation, outdoor surveillance, remote monitoring, and intelligent transportation.

Premio offers comprehensive technical support and customer service. Our team of experts is available to assist with installation, configuration, troubleshooting, and customization to ensure you get the most out of your AI Edge Inference Computers. Please contact us for technical support or email us at techsupport@premioinc.com.

For more information and to explore our range of AI Edge Inference Computers, please visit our official website or contact our sales team at sales@premioinc.com. We're here to help you find the best solution for your AI processing needs at the edge.